AI: Generated Art

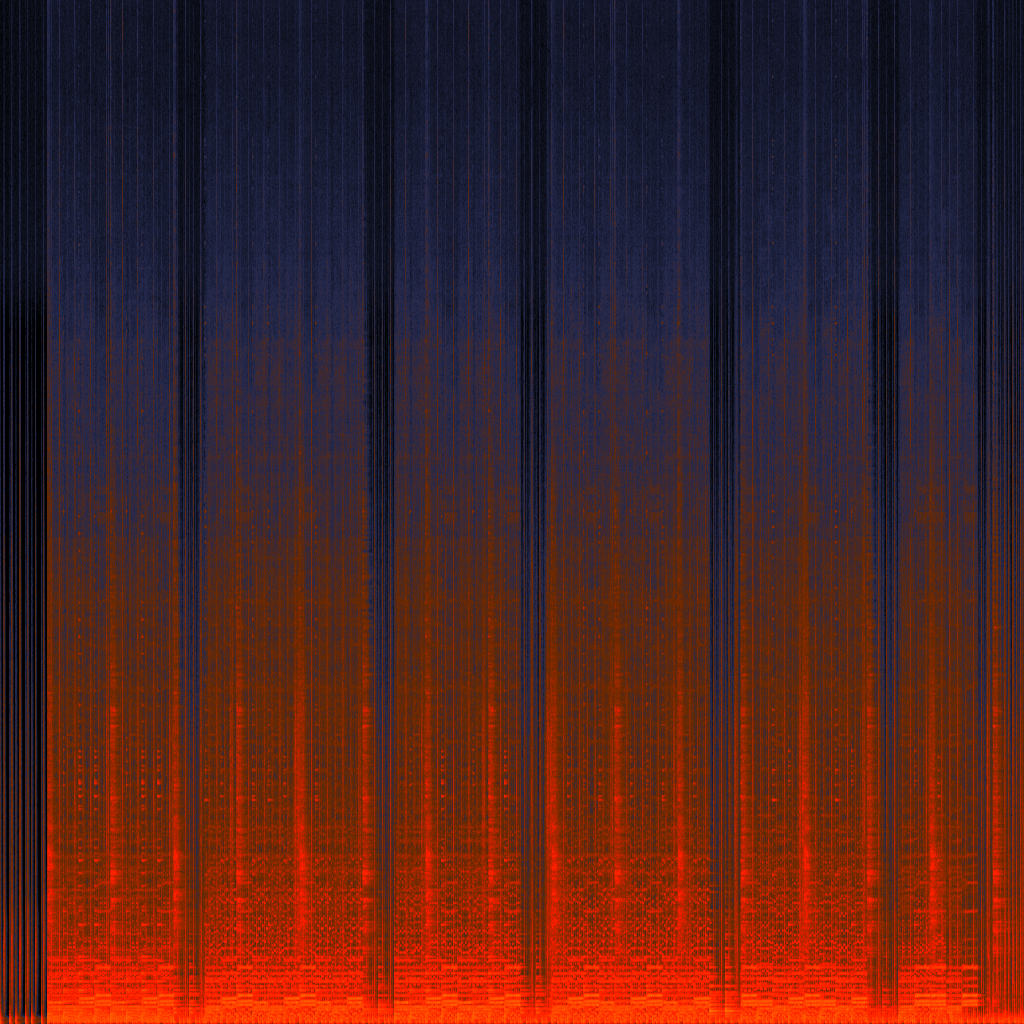

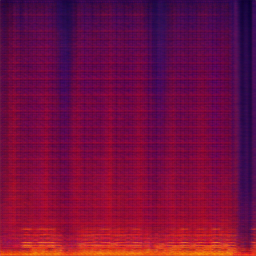

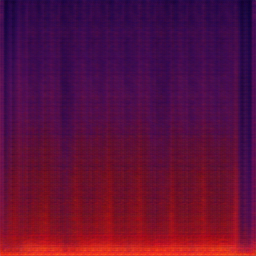

During my university studies, I explored the creative application of AI by working with the Pix2Pix model to translate audio spectrograms into illustrated-style visuals. I created and curated the training dataset using my own original artwork, pairing hand-drawn illustrations with corresponding spectrogram imagery to train and test the model. Through this process, I experimented with visual styles, data relationships, and model behaviour, investigating how machine learning can be shaped by human creative input and how sound, illustration, and generative systems can intersect within a design-led research context.

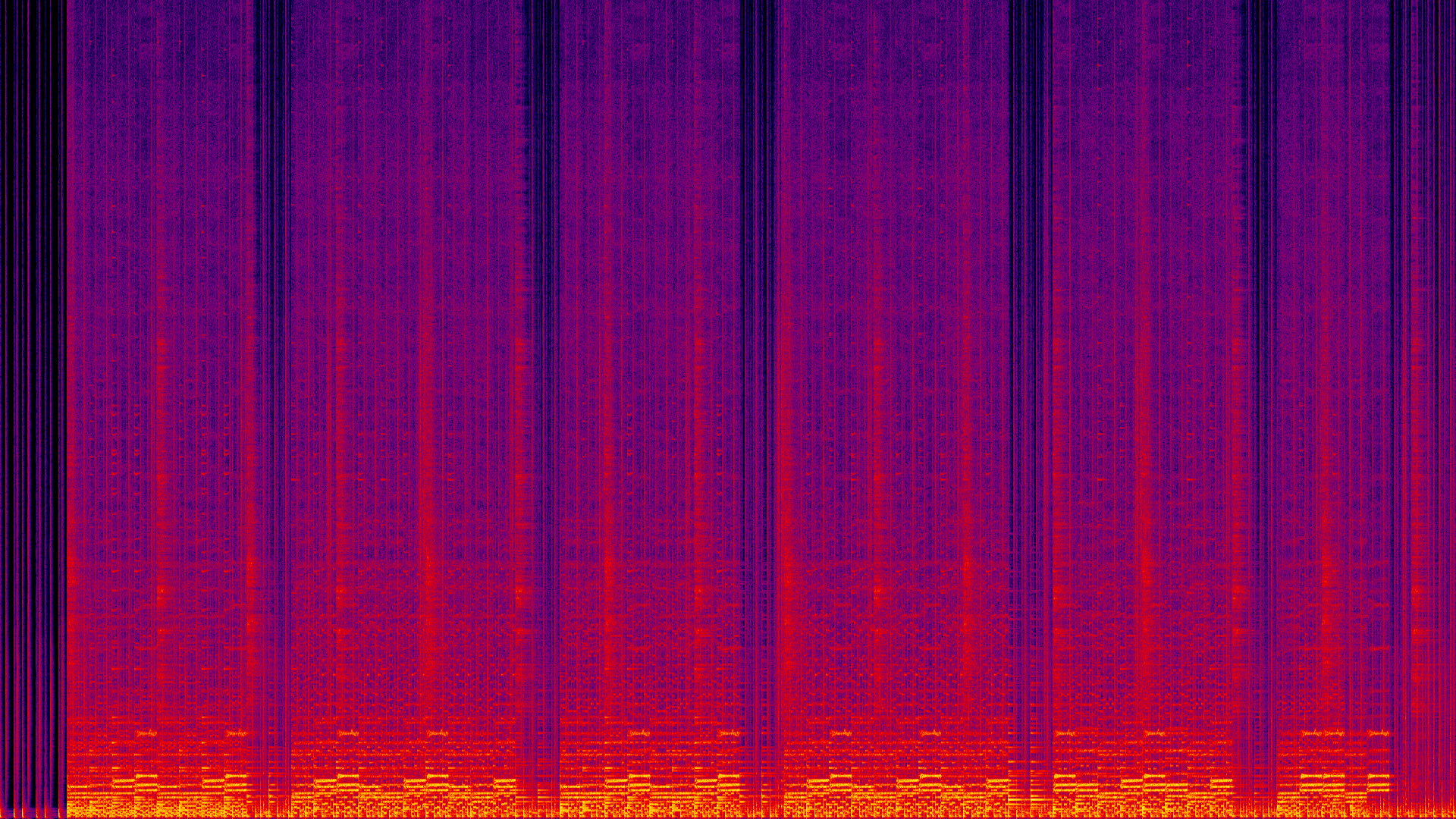

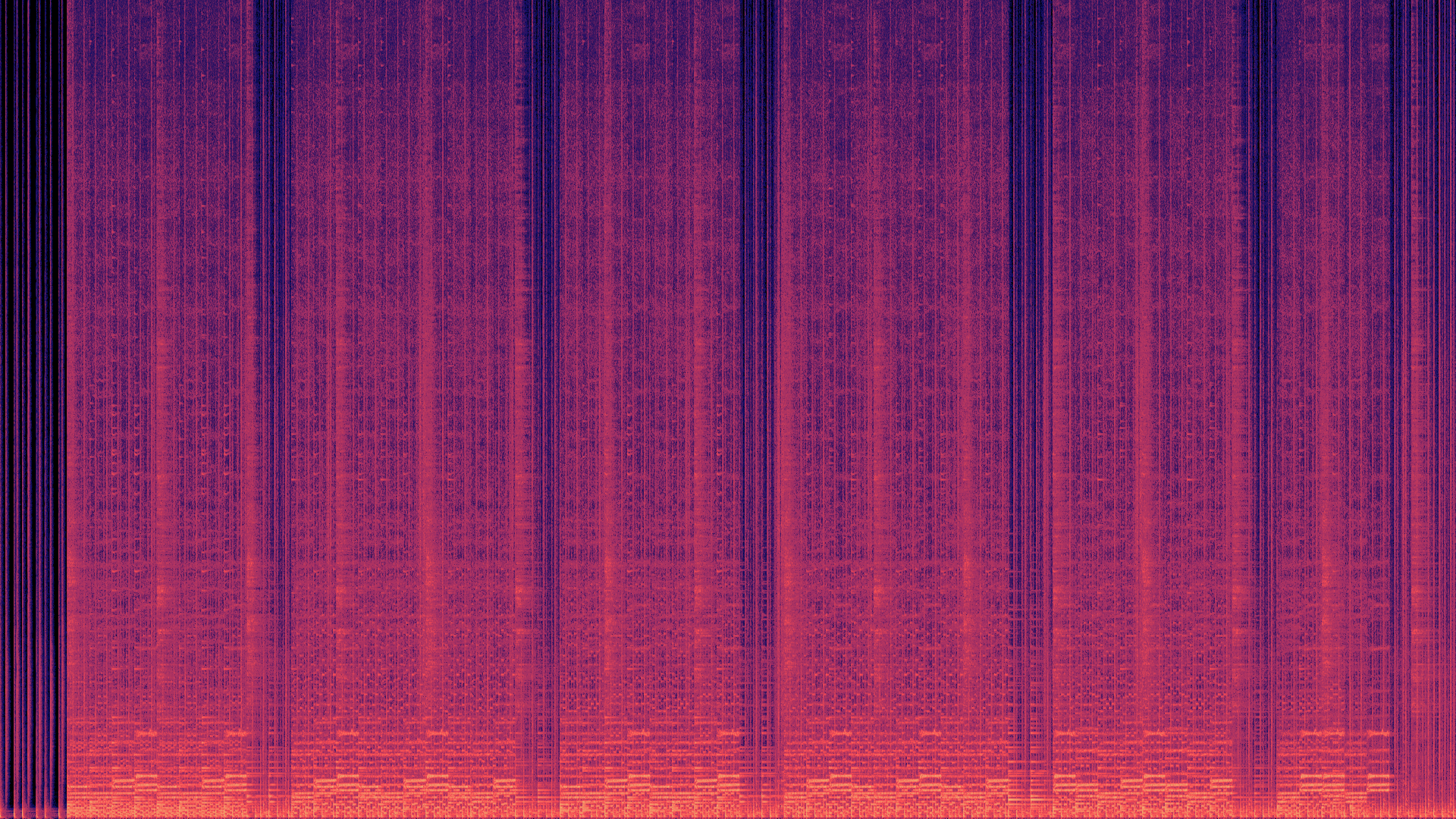

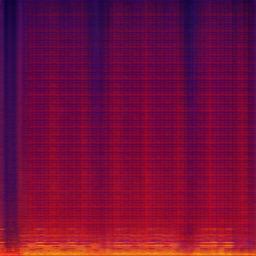

Spectrograms

Alongside the creative exploration, I taught myself the technical foundations required to realise the project. This included learning to code, generating and processing audio spectrograms, and programming and training the Pix2Pix model from the ground up. I composed the original music used to produce the spectrogram data and created all accompanying artwork, allowing full control over both the audio and visual inputs. Through iterative testing and refinement, I developed an understanding of how data structure, training material, and model parameters influence output, gaining hands-on experience in building and directing an AI system as part of a creative practice.
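To make the spectrogram step concrete, here is a minimal sketch of turning audio into a greyscale spectrogram image with a short-time Fourier transform. It is an illustration under stated assumptions, not the code used in this project: the function names, frame size, hop length, 80 dB dynamic range, and the synthetic 440 Hz tone standing in for the composed music are all hypothetical choices.

```python
import numpy as np

def spectrogram_db(signal, frame_size=1024, hop=256):
    """Return a log-magnitude spectrogram (frequency bins x frames) in dB."""
    window = np.hanning(frame_size)
    n_frames = 1 + (len(signal) - frame_size) // hop
    # Slice the signal into overlapping, windowed frames.
    frames = np.stack([
        signal[i * hop : i * hop + frame_size] * window
        for i in range(n_frames)
    ])
    # One FFT per frame; transpose so rows are frequencies, columns are time.
    magnitude = np.abs(np.fft.rfft(frames, axis=1)).T
    return 20.0 * np.log10(magnitude + 1e-10)  # small offset avoids log(0)

def to_uint8_image(spec_db, dynamic_range=80.0):
    """Map the loudest `dynamic_range` dB onto 0..255 greyscale values."""
    floor = spec_db.max() - dynamic_range
    clipped = np.clip(spec_db, floor, spec_db.max())
    scaled = (clipped - floor) / dynamic_range
    # Flip vertically so low frequencies sit at the bottom of the image.
    return np.flipud(np.round(scaled * 255).astype(np.uint8))

# Stand-in audio (assumption): one second of a 440 Hz sine at 22,050 Hz.
sample_rate = 22050
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440.0 * t)

spec = spectrogram_db(tone)
image = to_uint8_image(spec)
print(spec.shape, image.dtype)
```

The resulting array can be saved with any image library and resized to whatever resolution the model expects. Larger frame sizes sharpen frequency detail at the cost of time detail, which changes the visual texture the model learns from.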

Generated Images

On a deeper technical level, I worked with data preparation, model training workflows, and iterative testing to refine the system’s outputs. This involved structuring paired image datasets, adjusting training parameters, and evaluating results to better understand how the model interpreted visual and audio information. I learned to troubleshoot errors, manage training limitations, and adapt the process based on observed outcomes, developing practical skills in debugging, optimisation, and computational problem-solving within a creative AI context.
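As a rough illustration of what structuring a paired dataset can look like, the sketch below joins each spectrogram with its matching illustration into one side-by-side image, a layout that several public Pix2Pix implementations read as input on one half and target on the other. Whether this project used that layout is not stated, so treat the function names, the 256 x 256 size, and the left/right ordering as assumptions.

```python
import numpy as np

def make_pair(spectrogram_img, illustration_img):
    """Join input (left half) and target (right half) into one training image."""
    if spectrogram_img.shape != illustration_img.shape:
        raise ValueError("input and target must have the same height, width and channels")
    return np.concatenate([spectrogram_img, illustration_img], axis=1)

def split_pair(pair):
    """Recover (input, target) from a side-by-side training image."""
    width = pair.shape[1] // 2
    return pair[:, :width], pair[:, width:]

def to_model_range(img_uint8):
    """Scale 0..255 pixels to [-1, 1], the range of a tanh generator output."""
    return img_uint8.astype(np.float32) / 127.5 - 1.0

# Placeholder arrays (assumption) standing in for a real spectrogram and drawing.
rng = np.random.default_rng(0)
spectrogram_img = rng.integers(0, 256, size=(256, 256, 3), dtype=np.uint8)
illustration_img = rng.integers(0, 256, size=(256, 256, 3), dtype=np.uint8)

pair = make_pair(spectrogram_img, illustration_img)
model_input, model_target = split_pair(pair)
print(pair.shape, to_model_range(model_input).dtype)
```

Keeping each pair in a single file makes it harder for an input and its target to drift out of alignment when files are renamed or shuffled, which is a common source of confusing training results.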

NOTE: This piece is still a work in progress. Stay tuned for updates!